TL;DR

MCP gateways are quickly becoming a foundational layer for scaling AI agents in production. They act as a centralized control point between agents and external services, managing authentication, observability, and governance for tool usage. This guide reviews five notable MCP gateway platforms: Bifrost, Docker MCP Gateway, TrueFoundry, Kong AI Gateway, and Lasso Security. Each platform addresses different production requirements, from ultra-low-latency routing to enterprise governance and compliance-focused security.

Why MCP Gateways Matter in Production

The Model Context Protocol (MCP), introduced by Anthropic in late 2024, provides a standardized way for AI agents to discover and execute external tools. Instead of building custom integrations for every API or service, developers expose functionality through MCP servers, allowing agents to interact through a consistent JSON-RPC 2.0 interface. JSON-RPC is a lightweight, JSON-based remote procedure call protocol: a client sends a request that names a method and its parameters, and the server replies with either a result or an error.
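To make the message format concrete, here is a minimal sketch of how a client might build a tool-invocation request. The `tools/call` method and the `name`/`arguments` parameters follow the MCP specification; the `get_weather` tool and its argument are hypothetical and exist only for illustration.

```python
import json
from itertools import count

# Each JSON-RPC request carries an id so the response can be matched to it.
_request_ids = count(1)


def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request that asks an MCP server to run a tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool name and arguments, for illustration only.
print(make_tool_call("get_weather", {"city": "Berlin"}))
```

The same envelope is used regardless of which tool is called, which is what lets a gateway inspect, log, or block requests without knowing anything about the tool behind them.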

However, deploying MCP servers directly in production quickly introduces operational complexity. Every server may have its own authentication scheme, rate limits, and logging approach. When multiple teams and dozens of tools are involved, maintaining direct connections becomes difficult to manage and risky from a security perspective.

An MCP gateway solves this by sitting between your agents and tool servers. It acts as a unified entry point that governs every tool invocation. Through the gateway, organizations can enforce access controls, capture metrics and audit logs, and introduce advanced capabilities such as caching or orchestration. As a result, choosing the right MCP gateway has become as important as selecting the right LLM provider.
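The idea of a single entry point can be sketched in a few lines. The example below is a toy, in-process illustration rather than any vendor's implementation: `ToyGateway`, the agent name, and the `search_docs` tool are all hypothetical. It shows the three responsibilities described above: checking access, recording an audit entry, and timing the call.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable

ToolHandler = Callable[[dict], Any]


@dataclass
class ToyGateway:
    """Hypothetical in-process gateway: one choke point for every tool call."""
    tools: dict[str, ToolHandler] = field(default_factory=dict)
    permissions: dict[str, set[str]] = field(default_factory=dict)  # agent -> allowed tools
    audit_log: list[dict] = field(default_factory=list)

    def call(self, agent: str, tool: str, arguments: dict) -> Any:
        allowed = tool in self.permissions.get(agent, set())
        # Record every attempt, including denied ones.
        entry = {"ts": time.time(), "agent": agent, "tool": tool, "allowed": allowed}
        self.audit_log.append(entry)
        if not allowed:
            raise PermissionError(f"{agent} may not call {tool}")
        start = time.perf_counter()
        try:
            return self.tools[tool](arguments)
        finally:
            # Capture latency as a basic metric, even if the tool raised.
            entry["duration_s"] = time.perf_counter() - start


gateway = ToyGateway(
    tools={"search_docs": lambda args: f"results for {args['query']}"},
    permissions={"support-bot": {"search_docs"}},
)
print(gateway.call("support-bot", "search_docs", {"query": "refund policy"}))
```

A production gateway does the same work across a network boundary and adds authentication, persistence, and transport handling, but the control flow is similar in spirit.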

Below are five production-ready MCP gateways worth considering.

1. Bifrost

Platform Overview

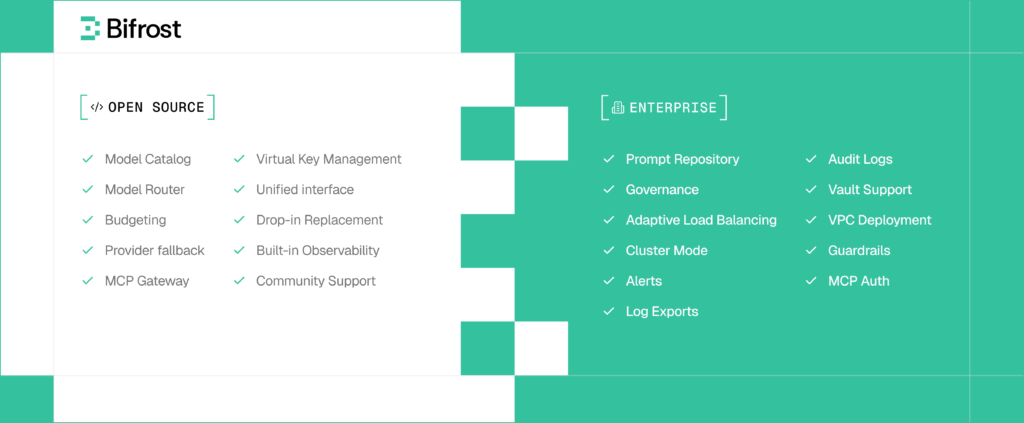

Bifrost is an open-source, high-performance AI gateway written in Go by Maxim AI. Originally designed as an LLM gateway with less than 11 microseconds of overhead at 5,000 requests per second, it has expanded into a full MCP gateway that manages both LLM routing and tool execution within a single control plane.

One of Bifrost’s defining characteristics is its bidirectional MCP architecture. It operates both as an MCP client, connecting to tool servers through STDIO, HTTP, or SSE, and as an MCP server that exposes tools to external applications like Claude Desktop. This dual capability allows Bifrost to function as the central coordination layer for AI agent systems.

Features

- Security-first tool execution

Tool calls are not automatically executed. Instead, execution must occur through the `/v1/mcp/tool/execute` endpoint, ensuring explicit control over potentially sensitive actions. Agent Mode can be enabled selectively for trusted tools.

- Code Mode

A distinctive feature that can reduce token usage by more than 50% and lower execution latency by 40 to 50% compared to traditional MCP. With Code Mode, the AI generates Python code that orchestrates tools inside a sandbox rather than exposing large tool lists directly to the LLM.

- Governance and virtual keys

Administrators can create separate virtual keys for teams or projects. Each key has its own rate limits, budgets, and tool permissions. This simplifies scenarios such as isolating engineering resources from marketing tools or enforcing spending limits.

- Full LLM gateway capabilities

Includes automatic failover, provider load balancing, semantic caching, and a unified API supporting 1000+ models across 20+ providers. Because Bifrost manages both model routing and MCP execution, teams can avoid fragmenting their AI infrastructure.

- Production observability

Built-in OpenTelemetry support, a native dashboard, and detailed request logging provide strong monitoring capabilities. Teams using Maxim AI for evaluation can integrate directly to gain visibility from tool execution through output quality analysis.

- Zero-config deployment

Getting started is simple. Run `npx -y @maximhq/bifrost` or `docker run -p 8080:8080 maximhq/bifrost`. A local web interface allows visual configuration of providers and MCP servers.
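The virtual-key idea from the governance feature above can be illustrated with a small model. This is a conceptual sketch only and does not reflect Bifrost's actual API or configuration format: the `VirtualKey` class, its fields, and the tool names are assumptions made for the example. Budgets are tracked in integer cents to avoid floating-point drift.

```python
from dataclasses import dataclass


@dataclass
class VirtualKey:
    """Hypothetical per-team key carrying tool permissions and a spending cap."""
    name: str
    allowed_tools: frozenset
    budget_cents: int
    spent_cents: int = 0

    def authorize(self, tool: str, cost_cents: int) -> bool:
        """Approve the call only if the tool is permitted and the budget holds."""
        if tool not in self.allowed_tools:
            return False
        if self.spent_cents + cost_cents > self.budget_cents:
            return False
        self.spent_cents += cost_cents
        return True


# Hypothetical teams and tools: each key sees only its own tool set and budget.
engineering = VirtualKey("engineering", frozenset({"run_query", "deploy"}), budget_cents=100)
marketing = VirtualKey("marketing", frozenset({"send_campaign"}), budget_cents=50)

print(engineering.authorize("run_query", 60))   # within budget and permitted
print(engineering.authorize("run_query", 60))   # would exceed the 100-cent budget
print(marketing.authorize("deploy", 1))         # tool not permitted for this key
```

Keeping permissions and budgets on the key, rather than on the agent or the tool, is what makes it straightforward to isolate one team's resources from another's.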

Best For

Teams building user-facing AI agents that require low latency, strong governance, and unified management of both LLM routing and MCP tools. It is particularly useful for organizations already using Maxim for evaluation workflows.

2. Docker MCP Gateway

Platform Overview

Docker MCP Gateway is an open-source implementation that treats MCP servers as containerized workloads. Each server runs in a dedicated Docker container with strict resource boundaries. This design makes it well suited for container-native environments.

Features

- Isolation through containerized MCP servers with restricted CPU, memory, and network access

- Built-in secrets management through Docker Desktop integration

- Dynamic discovery and management of MCP servers with commands such as mcp-find and mcp-add

- Strong supply-chain security through cryptographically signed images and provenance checks

- A unified endpoint that exposes multiple MCP servers from curated catalogs
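To show what container-level restrictions look like in practice, the sketch below assembles a plain `docker run` command with CPU, memory, and network limits. It illustrates the general isolation technique using standard Docker CLI flags, not how Docker MCP Gateway launches servers internally; the image name, container name, and limit values are placeholders.

```python
import shlex


def docker_run_args(image: str, name: str, cpus: str = "0.5",
                    memory: str = "256m", network: str = "none") -> list:
    """Build an argv list for running one MCP server in a constrained container."""
    return [
        "docker", "run", "--rm", "-i",
        "--name", name,
        "--cpus", cpus,        # cap CPU share
        "--memory", memory,    # cap RAM
        "--network", network,  # "none" disables all networking
        image,
    ]


# Placeholder image and container names, for illustration only.
args = docker_run_args("example/mcp-server:latest", "mcp-example")
print(shlex.join(args))
```

The argv list can be passed to `subprocess.run` if you want to launch the container from Python; printing it first makes the applied limits easy to review.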

3. TrueFoundry MCP Gateway

Platform Overview

TrueFoundry extends its broader AI platform to include MCP gateway functionality. The system combines model serving and tool execution in a single management interface.

Features

- Sub-3 millisecond latency using in-memory authentication and rate limiting

- MCP Server Groups for logical separation between teams or projects

- Centralized observability covering both LLM calls and tool executions

- A built-in playground for testing agent-to-tool workflows
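In-memory rate limiting is commonly implemented with a token bucket, which needs no network or disk access on the request path. The sketch below shows that general technique; it is not TrueFoundry's implementation, and the class name and parameters are assumptions for the example.

```python
import time


class TokenBucket:
    """Simple in-memory token bucket: refills continuously, spends one token per call."""

    def __init__(self, rate_per_s: float, capacity: float, clock=time.monotonic):
        self.rate = rate_per_s
        self.capacity = capacity
        self.tokens = capacity      # start with a full bucket
        self.clock = clock          # injectable so tests can control time
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Allow bursts of up to 2 calls, refilling at 1 call per second.
bucket = TokenBucket(rate_per_s=1.0, capacity=2.0)
print([bucket.allow() for _ in range(3)])
```

Because the check is a handful of arithmetic operations on local state, it adds very little latency per request. Sharing limits across multiple gateway instances would need extra coordination that this sketch does not cover.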

4. Kong AI Gateway

Platform Overview

Kong applies its established API gateway expertise to MCP by introducing enterprise-grade governance and security features for agent-tool communication.

Features

- MCP Registry for centralized tool discovery and catalog management

- OAuth 2.1 enforcement aligned with the latest MCP specification

- Granular authorization policies for individual tools using Kong plugins

- Integration with Kong Konnect for unified management of APIs and MCP services

5. Lasso Security

Platform Overview

Lasso Security approaches MCP gateways from a security and compliance perspective. Its platform emphasizes detailed monitoring, auditing, and threat detection for agent-driven workflows.

Features

- Real-time threat detection for tool invocations with anomaly monitoring

- Comprehensive audit logs tailored for regulated sectors such as healthcare and finance

- Detailed security telemetry capturing every agent action

- SOC2-aligned governance controls suitable for enterprise deployments

Making the Right Choice

The best MCP gateway depends largely on your operational priorities:

| Priority | Recommended Gateway |
| --- | --- |
| Low latency with unified LLM and MCP management | Bifrost |
| Container-native isolation | Docker MCP Gateway |
| Integrated AI infrastructure platform | TrueFoundry |
| Extending existing API governance | Kong AI Gateway |
| Compliance-focused security monitoring | Lasso Security |

For many teams deploying production AI agents, the choice often comes down to whether a unified infrastructure layer is needed for both LLM routing and tool execution. This is an area where Bifrost stands out. In other cases, a specialized solution focused on container isolation or compliance may be more appropriate.

Regardless of the gateway selected, combining it with a strong AI evaluation and observability platform helps ensure that agents are not only connected to the right tools but also delivering reliable, high-quality outputs in production.